Emojis at #useR2017

Because of the imminent birth of my second daughter, I did not get to go to useR, so I’ve been feeling a mix of frustration, impatience and FOMO. I was really pleased to find out that, for the second year, part of the conference would be streamed live and most of it would be available later.

#user2017 will be livestreamed starting Weds. Tomorrow's tutorials will be recorded. Details and schedule: https://t.co/bqnH0pYs2R #rstats

— David Smith (@revodavid) July 3, 2017

I combined watching the live stream with constantly refreshing the #useR2017 column in Tweetbot, until this became overwhelming enough for me to come up with a way to visualise the tweet storm from a few angles. This was a good opportunity to learn the rtweet package to grab the tweets, and shinydashboard for the visualisation.

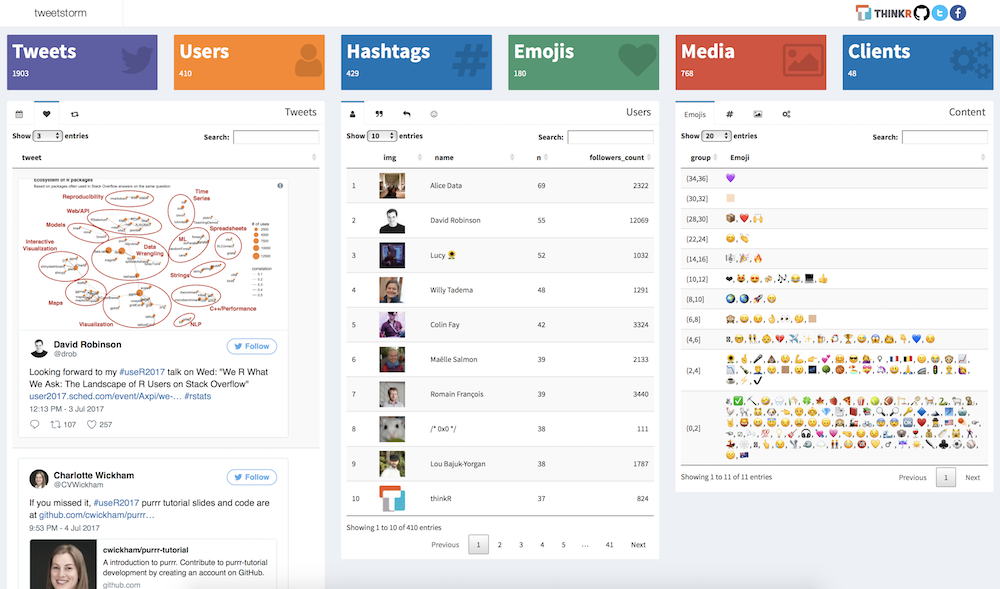

A few hours and a few tweets later, we released this as the pet project tweetstorm, a shiny dashboard to glimpse activity on Twitter and answer some questions:

- How many tweets from how many Twitter users

- Related hashtags

- Which tweets were the most fav’ed or retweeted

- Who tweeted the most, was quoted or replied to the most

- …
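As a rough illustration of how such questions can be answered once the tweets sit in a data frame, here is a minimal base R sketch. The toy data is made up, and the column names (`screen_name`, `text`, `favorite_count`, `retweet_count`) are assumptions for the example, not necessarily what the app uses.

```r
# Toy stand-in for the tweets data set; all values are invented.
tweets <- data.frame(
  screen_name    = c("alice", "bob", "alice", "carol"),
  text           = c("Loving #useR2017 #rstats",
                     "Keynote time #useR2017",
                     "#useR2017 #rstats #shiny demo",
                     "Hello #useR2017"),
  favorite_count = c(5L, 12L, 3L, 0L),
  retweet_count  = c(1L, 7L, 0L, 0L),
  stringsAsFactors = FALSE
)

# How many tweets, from how many users
n_tweets <- nrow(tweets)
n_users  <- length(unique(tweets$screen_name))

# Related hashtags: every hashtag except the one being tracked
hashtags <- unlist(regmatches(tweets$text, gregexpr("#[[:alnum:]_]+", tweets$text)))
related  <- sort(table(hashtags[tolower(hashtags) != "#user2017"]), decreasing = TRUE)

# Most fav'ed tweet, and who tweeted the most
most_faved <- tweets[which.max(tweets$favorite_count), ]
top_users  <- sort(table(tweets$screen_name), decreasing = TRUE)
```

Each question boils down to a count or a sort, which is what makes a dashboard a natural fit.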

Some of us use emojis in our tweets, and I learned from Sean Kross how to extract them from a tweet, borrowing a regex from his article: Which Emojis Does Lucy Use in Commit Messages?

See: https://t.co/azdxMF9WtB

— Sean Kross (@seankross) July 6, 2017

And: https://t.co/jyt5JoMNyD

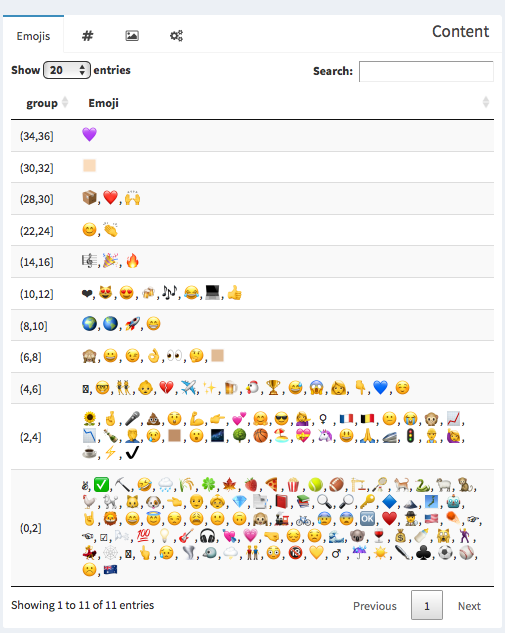

There are now two emoji-related displays in the app. The first one packs emojis together based on how many times they have been used, through the tweetstorm::extract_emojis function.

    #' Extract emojis
    #'
    #' @param text text with emojis
    #'
    #' @return a tibble with the columns `Emoji` and `n`
    #'
    #' @references
    #' Inspired by [Which Emojis Does Lucy Use in Commit Messages?](http://seankross.com/2017/05/30/Which-Emojis-Does-Lucy-Use-in-Commit-Messages.html)
    #'
    #' @export
    #' @importFrom stringr str_extract_all str_split
    #' @importFrom purrr flatten_chr
    #' @importFrom tibble as_tibble
    #' @importFrom magrittr %>% set_names
    extract_emojis <- function(text){
      # emoji_regex and not_equal() are tweetstorm internals not shown here
      str_extract_all(text, emoji_regex ) %>%
        flatten_chr() %>%
        # split runs of emojis into single characters
        str_split("") %>%
        flatten_chr() %>%
        not_equal("-") %>%
        # count each emoji, most used first
        table() %>%
        sort(decreasing = TRUE) %>%
        as_tibble() %>%
        set_names( c("Emoji", "n") )
    }
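Since `emoji_regex` and `not_equal()` are not shown above, here is a self-contained sketch of the same counting idea. The code point range used as the pattern is a deliberately crude assumption (it only covers the main pictograph blocks), not the regex borrowed from Sean's article, and the input is toy data.

```r
library(stringr)

# Simplified stand-in for emoji_regex (assumption): one code point in the
# U+1F300..U+1FAFF range, which covers most pictographs but not all emojis.
simple_emoji <- "[\\x{1F300}-\\x{1FAFF}]"

text <- c(
  "I \U0001F49C R \U0001F4E6",   # purple heart, package
  "\U0001F49C\U0001F49C useR",   # two purple hearts
  "no emoji here"
)

# Extract every match, pool them, then count and sort
found  <- unlist(str_extract_all(text, simple_emoji))
tab    <- sort(table(found), decreasing = TRUE)
counts <- data.frame(
  Emoji = names(tab),
  n     = as.integer(tab),
  stringsAsFactors = FALSE
)
```

With this toy input, `counts` has one row per distinct emoji, with the purple heart first.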

There’s still some work to do on skin tone modifiers, which currently get counted as emojis of their own, but it’s great to see that the most popular emojis spread love and package use.
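For what it's worth, here are two possible ways to handle skin tone modifiers, sketched with stringr. The modifiers are the five code points U+1F3FB to U+1F3FF; the base emoji range is the same simplified assumption as before, and neither option is what tweetstorm currently does.

```r
library(stringr)

# The five Fitzpatrick skin tone modifiers
skin_tones <- "[\\x{1F3FB}-\\x{1F3FF}]"

# thumbs up + medium tone, plain thumbs up, raised hands + light tone
text <- "\U0001F44D\U0001F3FD \U0001F44D \U0001F64C\U0001F3FB"

# Option 1: drop the modifiers, so all skin tones count as the same emoji
stripped <- str_replace_all(text, skin_tones, "")

# Option 2: keep each modifier attached to the emoji it follows
# (simplified base range, an assumption for this sketch)
with_modifier <- paste0("[\\x{1F300}-\\x{1FAFF}]", skin_tones, "?")
tokens <- str_extract_all(text, with_modifier)[[1]]
```

Option 1 gives simpler counts, while option 2 preserves what people actually typed; which one is preferable depends on what the display is meant to show.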

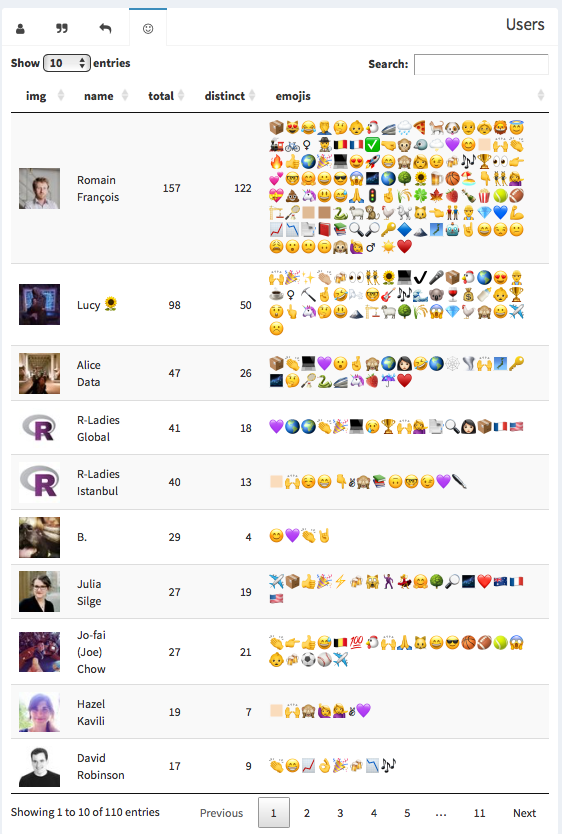

The other emoji-related display in the app groups them by user, so that we can verify that Lucy also makes extensive use of emojis on Twitter. That’s the job of the tweetstorm::extract_emojis_users function:

    #' Extract information about users that use emojis
    #'
    #' @param tweets tweets data set
    #' @export
    extract_emojis_users <- function(tweets){
      data <- tweets %>%
        select( user_id, text ) %>%
        # one list of emoji matches per tweet
        mutate(
          emojis = str_extract_all(text, emoji_regex ) %>% map( not_equal, "-" )
        ) %>%
        # keep only tweets that contain at least one emoji
        filter( map_int(emojis, length) > 0 ) %>%
        group_by( user_id ) %>%
        # one frequency table of single emojis per user
        summarise(
          emojis = map(emojis, ~ flatten_chr(str_split(., "") ) ) %>% flatten_chr() %>% table() %>% list()
        ) %>%
        mutate(
          total = map_int(emojis, sum),
          distinct = map_int(emojis, length),
          # collapse the table into a string, most used emoji first
          emojis = map_chr( emojis, ~ paste( names(.)[ order(., decreasing = TRUE)], collapse = "") )
        ) %>%
        arrange( desc(total) )

      # add profile information for display
      left_join( data, lookup_users(data$user_id), by = "user_id" ) %>%
        mutate( img = sprintf('<img src="%s" />', profile_image_url ) ) %>%
        select( img, name, total, distinct, emojis )
    }
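To make the per-user summary concrete, here is a small self-contained sketch of the same idea on made-up data. It swaps the dplyr/purrr pipeline for base R, uses a simplified code point range instead of `emoji_regex` (an assumption), and leaves out the `lookup_users()` step, which needs access to the Twitter API.

```r
library(stringr)

# Toy tweets; user ids and text are invented for the example.
tweets <- data.frame(
  user_id = c("1", "2", "1", "3"),
  text    = c("\U0001F49C\U0001F4E6 new release",
              "\U0001F525 talk",
              "still \U0001F49C it",
              "no emoji here"),
  stringsAsFactors = FALSE
)

# Simplified stand-in for emoji_regex (assumption)
simple_emoji <- "[\\x{1F300}-\\x{1FAFF}]"

# Emojis per tweet, pooled per user, dropping users without any emoji
found    <- str_extract_all(tweets$text, simple_emoji)
per_user <- lapply(split(found, tweets$user_id), unlist)
per_user <- per_user[lengths(per_user) > 0]

by_user <- data.frame(
  user_id  = names(per_user),
  total    = vapply(per_user, length, integer(1)),
  distinct = vapply(per_user, function(e) length(unique(e)), integer(1)),
  # distinct emojis pasted together, most used first
  emojis   = vapply(per_user, function(e) {
    paste(names(sort(table(e), decreasing = TRUE)), collapse = "")
  }, character(1)),
  stringsAsFactors = FALSE
)
by_user <- by_user[order(-by_user$total), ]
```

Here user `1` comes first with three emojis in total, two of them distinct, and user `3` is dropped because none of their tweets contains an emoji.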

I come first there, but that’s not fair, because at some point while developing the app I tweeted the list of all the emojis used so far.

#useR2017 emojis: 💜😊🏻🙌📦👏🔥👍😻😂🌍🎉💻😍🤔🚀😁🙈🇧🇪👩😉🍻🎶🏆👀👉👶💕🤓🤗😀😎😱🌌🌎🌳🌻🍺🏀🏖👇👯💁💝💩🦄😃😅🙏🚄🚦🤞🇫🇷🌧🌾🍀🍁🍓🍕🍾🍿🎾🏈🏗🏸🏼🏽🐈🐍🐑🐒🐓🐔🐩🐱🐶👈👬👴👵👷💎💙💪📈📉📑📕📚🔍🔎🔑🔷🕵️🗻🗾🤖🤘🦁😄😇😒😕😩😮🙁🙃🙉🙋🚂🚲🤦

— Romain François 🦄 (@romain_francois) July 7, 2017

But apart from that, there’s no doubt that Lucy uses lots of emojis:

Here’s an example:

so grateful for inclusion initiatives at #useR2017 including

👯 newbie session

💰 diversity scholarships

🍼 breast feeding area

👶 child care

— 𝓛𝓾𝓬𝔂::maternity_leave🤱 (@LucyStats) July 7, 2017

The app is live here; it may still change and/or move to a new URL at some point.

Right now it always uses the data I extracted, before it was too late, between these two instants:

> tweetstorm::useR2017 %>% pull(created_at) %>% range

[1] "2017-06-29 10:07:14 UTC" "2017-07-11 09:55:06 UTC"

I’ll keep updating the dataset for some time, as there are likely to be new tweets, e.g. when we go through the slides or videos, …